Project summary

The project is a system that simulates the behavior of a physical object under specific conditions. It was already in operation when JazzTeam joined to increase product stability and improve the development processes.

Instability was one of the most important and difficult problems. Deliveries were irregular, and it was difficult to predict how the application would behave after the code was modified. Therefore, JazzTeam's CTO first decided to implement automated testing within the CI/CD system.

The main reasons for introducing automated testing were as follows:

- The team often faced situations where changes in one application module caused regression bugs in another.

- The application performs many calculations, so Unit testing is the most logical approach to quality assurance.

Each instance of the application is unique and has its own serial number and USB hardware key, which was an important factor affecting the entire CI/CD system. This imposes the following restrictions:

- The need to work with hardware keys.

- Different behavior of a protected and unprotected application.

- The inability to conduct full-fledged automated testing of the application at the developer’s workplace.

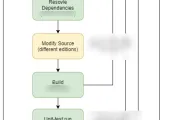

Continuous Integration and Continuous Delivery

As part of Continuous Integration, the main objective was to implement daily automated quality control via Unit and UI testing. This was needed to achieve the following strategic goals:

- Make sure that at almost any point of time there is a workable build of the application containing the latest changes.

- Make the application stable. New functionality shouldn’t break the product.

- Reduce the effort required for bug fixing and for delivering new application instances.

As part of Continuous Delivery, the main goals were as follows:

- Automate the delivery process.

- Reduce the influence of the human factor.

- Improve the testing of ready-made application instances before their delivery.

Setting up processes

To implement CI/CD, it was also necessary to set up management processes: organizing the workflow from task setting to testing, implementing Scrum, and changing the team's mindset.

Before JazzTeam joined the project, it was normal practice to do everything quickly, without documentation or thorough testing. Naturally, much time was spent on support because of recurring problems during delivery. Three months later the development team had begun to reduce technical debt and maintain the necessary documentation, while the speed of creating new functionality remained the same. As a result, the number of delivery problems decreased significantly.

Covering new functionality with Unit tests and automated UI tests became a mandatory step in its development.

Key achievements on the project during the first six months were:

- Significant reduction of the risk of bugs when changes were introduced into existing code.

- Improvement of the psychological climate in the team: increased motivation to accumulate best practices and an understanding that each person's efforts really make the product better.

- More competent work with legacy code: a component is first completely covered by integration and UI tests, and only then are changes made to it. This made the development process more transparent and predictable.

- Reduced development effort thanks to early detection of bugs and automation of software delivery.

1. Establishing a value-based approach to the development process: organizational, managerial and technical consulting.

2. Setting up the processes: engaging our company's manager to manage the project, implementation of Scrum and test management optimization.

3. Changing the team's mindset: self-organization of the team, implementation of the best engineering practices and approaches, overcoming learned helplessness, constant work with technical debt.

4. Automation of the application development lifecycle: CI/CD implementation.

5. Quality assurance for the product: implementation of UI/Unit testing practice by developers using the DDT approach.

- Barriers to regular building up of the product's functionality were eliminated.

- QA processes were organized, Scrum and CI/CD were implemented.

- Release speed was increased by 5 times.

- Stable functioning of the product; the customer was confident in the quality of each delivery.

- A value-based approach to the development process was formed, and the psychological climate in the team was improved.

Unit and integration testing

Well-managed testing changed the team's view of the development process. Now, when fixing a bug, it is considered good style to cover that part of the code completely with tests in order to eliminate the risk of the bug recurring. Also, as mentioned above, before making serious changes to an already working component, it became best practice to cover it with tests first and only then modify it.
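The "cover it with tests first, then change it" practice can be illustrated with a characterization test. This is a minimal sketch in Python rather than the project's own .NET code; `legacy_damping`, `refactored_damping` and the input values are hypothetical stand-ins, not taken from the project.

```python
import math

def legacy_damping(amplitude, rate, t):
    """Hypothetical stand-in for a legacy calculation that is about to change."""
    return amplitude * math.exp(-rate * t)

def record_baseline(func, inputs):
    """Run the current implementation and keep its outputs as the reference."""
    return {args: func(*args) for args in inputs}

def check_against_baseline(func, baseline, rel_tol=1e-9):
    """Return the inputs whose output no longer matches the recorded baseline."""
    return [args for args, expected in baseline.items()
            if not math.isclose(func(*args), expected, rel_tol=rel_tol)]

# Step 1: pin down the existing behavior before touching the code.
INPUTS = [(1.0, 0.5, 0.0), (1.0, 0.5, 2.0), (3.0, 0.1, 10.0)]
baseline = record_baseline(legacy_damping, INPUTS)

# Step 2: change the implementation (here, a hypothetical refactoring).
def refactored_damping(amplitude, rate, t):
    return amplitude / math.exp(rate * t)

# Step 3: an empty list means the change preserved the recorded behavior.
mismatches = check_against_baseline(refactored_damping, baseline)
```

The baseline is recorded from the code as it currently behaves, so the test protects against unintended changes even when nobody can state the "correct" result up front.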

Since the system works with scientific data, active use of data-driven tests (DDT) became an important area of testing. This allowed us to involve the entire project team in the testing process.

After each sprint, metrics on changes in test code coverage are recorded so that the coverage trend can be tracked. We use two key reference metrics: line coverage and condition coverage.

Progress is not fast, as the project has a very large legacy code base of several hundred thousand lines of code. As a result, coverage increased by only 2 percent in two months. However, the absolute number of Unit tests increased by 25%. These are not the most impressive figures, but our main goal was to change the team's approach to writing Unit tests and integration DDT tests, and we achieved this goal.
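A data-driven test separates the test logic from a table of inputs and expected results, which is one way people who do not write code can contribute cases. The sketch below is in Python rather than the project's .NET stack; the `free_fall_distance` calculation and the case table are hypothetical and only illustrate the pattern.

```python
import math

def free_fall_distance(t, g=9.81):
    """Hypothetical calculation under test: distance fallen after t seconds."""
    return 0.5 * g * t * t

# Each row is one test case: (description, input t in s, expected distance in m).
# New cases are added here without changing the test logic below.
CASES = [
    ("at rest", 0.0, 0.0),
    ("one second", 1.0, 4.905),
    ("two seconds", 2.0, 19.62),
]

def run_cases(func, cases, abs_tol=1e-9):
    """Run every row through func and collect the rows that fail."""
    failures = []
    for name, t, expected in cases:
        actual = func(t)
        if not math.isclose(actual, expected, abs_tol=abs_tol):
            failures.append((name, expected, actual))
    return failures

failures = run_cases(free_fall_distance, CASES)
```

In a .NET project the same idea is usually expressed with a test framework's parameterized tests; the table could equally be loaded from a file maintained by analysts or researchers.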
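Tracking the trend comes down to storing the two metrics per sprint and comparing consecutive sprints. The following Python sketch assumes a simple record per sprint; the `SprintCoverage` type and all numbers are invented for illustration and are not the project's data.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SprintCoverage:
    """Coverage counters recorded at the end of one sprint (hypothetical shape)."""
    sprint: str
    lines_covered: int
    lines_total: int
    conditions_covered: int
    conditions_total: int

    @property
    def line_pct(self):
        return 100.0 * self.lines_covered / self.lines_total

    @property
    def condition_pct(self):
        return 100.0 * self.conditions_covered / self.conditions_total

def trend(history):
    """Return (sprint, line delta, condition delta) in percentage points."""
    deltas = []
    for prev, cur in zip(history, history[1:]):
        deltas.append((cur.sprint,
                       round(cur.line_pct - prev.line_pct, 2),
                       round(cur.condition_pct - prev.condition_pct, 2)))
    return deltas

# Illustrative numbers only.
history = [
    SprintCoverage("sprint-1", 100, 1000, 20, 200),
    SprintCoverage("sprint-2", 120, 1000, 25, 200),
]
changes = trend(history)
```

Reporting the change in percentage points, rather than the raw percentage, makes slow but steady progress on a large legacy code base visible from sprint to sprint.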

UI testing

The introduction of UI tests started with a practical investigation conducted by the team. The idea was to choose two UI testing frameworks, write a few UI tests with each of them, compare the results and select one. For this project we chose between two free frameworks, Appium and White. After the practical trials we chose White.

The process was organized as follows: each developer allocated 5 hours a week to writing UI tests. The results were tracked every day at the stand-up and entered into a special table, which was printed out and placed near the Kanban board.

At the first stage only smoke UI tests (the basic, most important ones) were written. Even so, they allowed us to check automatically, rather than manually as before, that a finished application instance for the customer works.

At the second stage we made writing UI tests one of the criteria for a feature's readiness for testing. As new features were developed, this made it possible to gradually cover the entire functionality of the application with UI tests.

A completely new practice for the project was creating a test instance of the application with hardware protection enabled. A test USB security key is flashed at the same time. The test application is then installed on a separate machine, and the fully assembled application is tested in its operating environment using UI tests. This made it possible to identify defects at an earlier stage. It also made the development process faster, more transparent and predictable.

Previously, to test the application with a working protection system and all its components, the developer had to build it first. This required much effort and was done only at the end of the development process, before submitting the application for testing. Now the developer can run UI tests on the ready-made application at any time. Since this takes minimal effort, it became an element of good style. UI testing also became mandatory before merging into the main development branch.
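The flow for testing a protected build can be pictured as an ordered list of stages where a failure stops the run. This is only a sketch in Python: the stage functions are hypothetical placeholders, the real steps run as CI jobs, and the ordering is simplified to a strict sequence.

```python
def run_pipeline(stages):
    """Run stages in order and stop at the first failure.

    `stages` is a list of (name, action) pairs; each action returns True on success.
    """
    results = []
    for name, action in stages:
        ok = bool(action())
        results.append((name, ok))
        if not ok:
            break  # later stages depend on the earlier ones
    return results

# Placeholder actions (assumptions): a real job would invoke the build tooling,
# the key flashing utility, an installer and the UI test runner here.
def build_protected_instance():
    return True

def flash_test_key():
    return True

def install_on_test_machine():
    return True

def run_ui_tests():
    return True

STAGES = [
    ("build test instance with protection enabled", build_protected_instance),
    ("flash test USB key", flash_test_key),
    ("install on separate test machine", install_on_test_machine),
    ("run UI tests", run_ui_tests),
]

results = run_pipeline(STAGES)
```

Stopping at the first failed stage keeps the report short and points directly at the step that needs attention.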

Release management

The introduction of Continuous Delivery greatly simplified the creation of distribution packages. Any user, not just a developer, can create a new instance of the application.

In addition, automated testing of new copies of the application significantly increased the productivity of the team and made it possible to release twice as often.

Technologies

Stack: .NET, C#, C++, Managed C++.

Infrastructure: Windows, Jenkins.

Test Automation libraries: Appium, White.

Project features

- A hardware protection system, which imposes many restrictions on testing the application at the developer's workplace.

- A complex product delivery system: an individual application instance is created for each hardware key.

- A high-technology product with very high requirements for subject-area knowledge. It is common for a developer not to know the expected result and to actively involve business analysts and researchers in the development process.

- Tight coupling in the legacy code and, as a result, great complexity in adding new functionality and time-consuming refactoring.

Project results

After CI/CD implementation we achieved the following results:

- An up-to-date main working branch with a workable build of the application.

- We significantly reduced the laboriousness of bug fixing operations and delivery of new application instances.

- We significantly reduced the impact of the human factor with the help of delivery automation and daily automated testing.

Company’s achievements during the project

- A significant increase in the development speed.

- The customer became convinced that properly organized testing and Continuous Integration also work for a desktop application with hardware protection.

- Full involvement of the customer’s team in writing UI and Unit tests.
